Here is my note for paper “Fencing off Go: Liveness and Safety for Channel-Based Programming” when presenting it in our weekly paper reading group on 02/17/2017.

Please do not hesitate to correct me if I am wrong anywhere. Thanks in advance. 🙂

BTW, I also found the slides from author. Check it out.

02/23/2017 Update: Great thanks to Prof. Naoki Kobayashi for generously sharing his thoughts and correcting some errors in this note!

Why picked this paper

This paper is picked while iterating the papers list in POPL’17.

First of all, I need to admit that I’ve not written programs in Go before. Actually I’d like to take this chance to know more about the concurrency model in Go language. One year ago I wrote about the shared memory part of Go. At that time I thought Go only has simple constructs in such concurrency consistency model, now I realize that I was seriously wrong, Go could be focusing more on the channel-based message-passing style concurrency.

Another minor reason for picking this paper is because of word “fencing”. It’s only after reading the full paper that I realized “fencing” is not talking about the “fences/barriers” I am more familiar with.. I’ve never used phrase “fence off” (把…用栅栏隔开) before.. 😓

Problem & Contribution

According to the paper, for the channel-based message-passing style concurrency in Go language there is only runtime deadlock detector, but no compile time support on checking communication mismatch and partial deadlocks. The former is what they encode as “liveness” property, and the latter is their “channel safety” property.

Intuitively, the “communication mismatch” means every read/write must be “synchronized” with some other write/read, i.e., a WRITE should be read and a READ should reads from some WRITE.

The channel-safety property is simpler – channel is closed at most once, and closed channel cannot be used for output (writing to that channel), while input from closed is allowed, just returning default value.

This is to conform with semantics in Go. Originally, I was thinking they provide some verification framework for general liveness and safety predicates. That’s not the case in this paper. Actually, I am wondering how difficult it would be to extend to general predicates. 🤔

In this paper, they complement this compile time support. Essentially, a behavioral type sytem defined and extracted from source code such that if Safety (or Liveness) property holds in the behavioral type layer, Safety (or Liveness) property holds (may hold) in source code layer.

For channel-safety property, type level safety ⇒ source code level safety. While this may not necessarily hold for liveness property. We will see that later on.

They claim to support “dynamic channel creation”, “unbounded thread creation”. I suspect that “dynamic thread creation” is not supported at the moment, because I didn’t find corresponding semantics terms. But this may not be a big issue since analysis of Go source code could prepare this information beforehand.

Example – Prime Sieve

Before delving into details, let’s look at the Prime Sieve algorithm as an example demonstrating the entire workflow.

There is a very good visualization for Prime sieve algorithm in wikipedia. Basically, every prime number removes all future numbers that divdes this prime number.

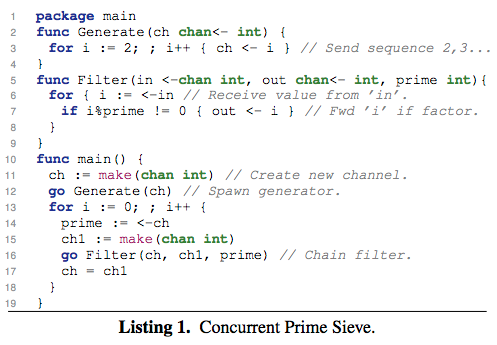

In Go, the concurrent channel-based version could be written as:

where

Generate()will be a Go Routine that keeps generating new numbers.Filter()will be a Go Routine transporting those numbers from input channel to output channel that is not dividable by a prime number.main()will be iterating all prime numbers, and use the filtered channel as new input channel.

To reason about liveness and channel-safety properties of this algorithm, this paper will

- Infer a calculi from Go source code called

MiGo, covering all channel-based concurrency usages. - Extract a behavioral type system for the calculi.

- When it type checks, verify the liveness/safety properties at type level. The link between type-level and calculi level liveness/safety properties is proved in the paper.

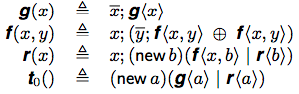

Regarding the prime sieve example, the extracted behavioral types would be:

where

t0is the entry point of program.- $\bar{x}$ means writing to channel

xwhile $x$ means reading from channelx. g(x)is for generator function, which writes something to channelxand recursively calls itself.f(x,y)is for filtering function, which first reads from channelxand then conditionally writes to channelybefore recursively calling itself.new bmeans creating a new channelb.- $\oplus$ is branching / choice operator.

|is for parallel composition.

For this example, it can be shown that all 4 types are fenced (we will explain shortly). And they can be shown to hold the liveness and safety properties by unfolding the behavioral type semantics.

Behavioral Types

Above is a quick view of extracted behavioral types. Frankly, I have not learned about this term before. According to survey Behavioral Types in Programming Languages:

A recent trend in current research is to use behavioral type theory as the basis for new foundations, programming languages, and software development methods for communication-intensive distributed systems.

Behavioral type theory encompasses concepts such as interfaces, communication protocols, contracts, and choreography.

Roughly speaking, a behavioral type describes a software entity, such as an object, a communication channel, or a Web Service, in terms of the sequences of operations that allow for a correct interaction among the involved entities.

And the authors seem to be experts in this area – they appear in the authors list of this survey and one tutorial cited in the paper. BTW, session types seem to be one kind of behavioral types.

Verification of Behavioral Types

As you can see, the defined behavioral type system is no longer simply carrying the type of payload data, it encodes certain behaviors in the type system. This makes it possible to define operational semantics on types.

Consequently, liveness / safety properties could be verified by the further defined operational semantics. In paper, this workflow is formulated as:

- If the symbolic executions of each Type holds the liveness / channel-safety property,

- then the property holds at the Behavioral Type level,

- then the property holds (maybe, in case of liveness) at the calculi level,

- then the property holds at the source code (because calculi covers all channel-based concurrency).

This 4 levels implication chain is my current comprehension of their entire approach workflow.

Fenced

However, with the straightforwardly defined behavioral types, it may not be decidable (proved long long ago). Thus a subset of types needs to be identified which could make it decidable. That is their fenced types.

// 02/23/2017: Thanks Prof. Naoki for sharing his thoughts and correcting some errors here!

Below is my Revised Understanding:

The semantics of their Types is defined in Fig. 5 using “types as Calculus of Computing Systems (CCS) processes” interpretation. The resulting Labeled Transition System (LTS) provides a state space to explore, which is possibly infinite and thus undecidable.

To resolve this, they define in Fig. 7 a Symbolic Semantics for Types, which can explore only a finite subset of the possibly infinite state space which could therefore make it decidable. This finiteness guarantee is made possible by additional parameters k and N, where k is the bound on steps (e.g. k-liveness / k-safety) and N is the bound on used channel names.

Regarding

k: Whenkis infinity, ∞-liveness/safety will again need to explore the possibly infinite state space. So ∞-liveness/safety is undecidable.Regarding

N: This bound is ensured by “Fenced”. My current intuition about their “Fenced” definition is elaborated as below:In semantics rules, they require in Def. 4.1 that:

types featuring parallel composition have a “finite memory” w.r.t. the names over which they can recurse.

For types without parallel composition, there is no restriction because this whole thing is regulating concurrent behaviors.

With parallel composition, they require channel names used in recursion to be “finitely many”, i.e., it’s fine to create new channels during recursion, as long as some same-amount old channel names are removed from scope and garbage collected. Therefore, the overall “spots” remains unchanged, and may “reduce to same state in LTS”. Through α-equivalence and structural congruence (see Proof for Lemma 4.1 in extended version paper), even if there are infinitely many channel names being created, they can reduce to finitely many states (with

k-bounded).

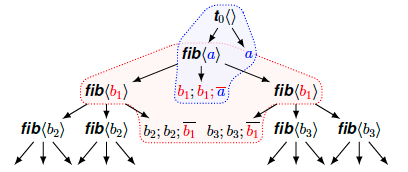

Let’s look in some visualized examples in addition to textual explainations. Intuitively, “having finite memory of channel names during recursion” implies no channel names should appear infinitely often. In other words, they appear in some fenced region. This is why they call it “fenced types”.

Below is one true fenced type diagram:

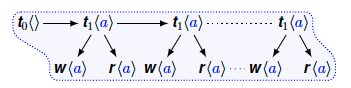

The blue region is where channel a appears. The red region is where channel b1 appears. In the following wrong fenced type diagram:

channel a appears infinitely often, it’s not fenced.

Special Cases Regarding Liveness

We mentioned earlier that channel-safety property can flow from behavioral type level to calculi level while this is not necessarily the case for liveness property.

This is because Type System is actually an approximation of code / calculi.

For the case of liveness, programs typically rely on data values to guide their control flow (e.g. in conditional branches) which are abstracted away at the type level.

For example, the liveness property may depend on exactly one branch in if conditional statement. If the if condition is always true or always false, the liveness property may not hold.

In the paper, they identify 3 special cases where liveness at the behavioral type level can extend to liveness at the calculi level:

- May Terminate

- Without infinitely running conditionals

- Non-deterministic conditional

I am not going into details of these here.

My Thoughts

Apparently, the key step in this paper is to successfully apply behavioral types.

I don’t know much about those type systems, and may not be doing type-system related works in foreseeable future. But in my shallow understanding, the verification effort still exists there, but now transfers from source code level to behavioral type level.

Hence, my doubt is how much would it gain to do such symbolic execution analysis at the type level? Maybe it becomes more powerful with additional type information? The authors do mention doing model checking with the defined operational semantics. Why is it better to do such thing in type level remains to be my overall question.

If the type system continues to approximate more and more of code, could we eventually apply concurrency logics (e.g. Rely/Guarantee) in the very rich type system? I’d be very interested if that is possible.

Besides, as mentioned earlier in the note, I am also wondering how difficult it would be to extend the current livenss / channel-safety properties to general predicates?

🤔